|

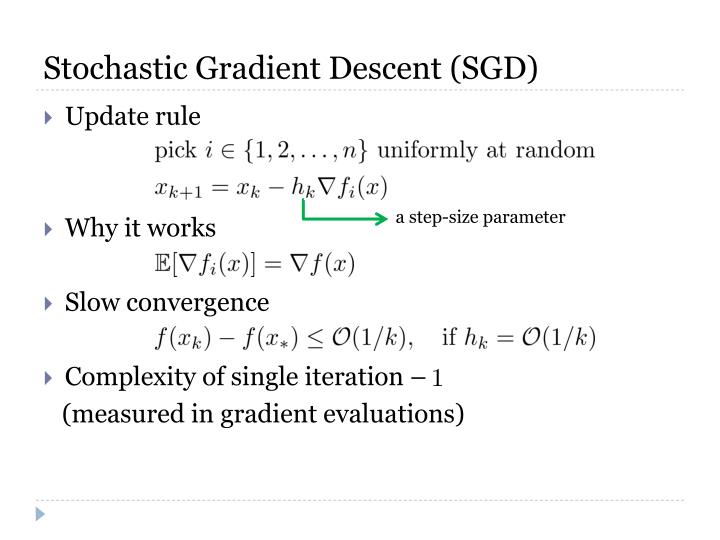

How would we go about parallelizing SGD? One could imagine an approach in which we take snapshot reads of the parameter vector, and periodically merge updates based on a given read in a synchronization step. So we cannot just naively execute a series of update operations in parallel. Each update operation involves both 1) reading the current parameter vector θ, and 2) writing to θ a modified value. Note that stochastic gradient descent, as we have described it thus far, is a sequential algorithm. In practice, batching can lead to a more stable trajectory than in SGD, and, perhaps surprisingly, better performance as well, given that the gradient computation is properly vectorized. Some definitions of SGD actually refer to mini-batch gradient descent. Mini-batch gradient descent uses a randomly selected subset, or mini-batch, of \(b\) training examples at each iteration, instead of just one. Though it may not be immediately obvious, theory assures us that if the learning rate γ is reduced appropriately over time, and the cost function satisfies certain properties (i.e. convexity), stochastic gradient descent will also converge.įinally, it is worth noting that there is a middle-ground between gradient descent and stochastic gradient descent, called mini-batch gradient descent. With proper tuning of the learning rate, however, stochastic gradient descent will approach the same minimum as gradient descent. Even if the error on one particular training example is reduced, it is possible (and in the beginning, almost as likely) that the error on the entire training set will increase. Figure 2: Unlike in gradient descent, the value of the cost function does not necessarily decrease with each iteration in stochastic gradient descent. Most data sets require between 1 to 10 runs though the inner loop.

If the number of training examples is huge, a single iteration through the training examples can suffice. Note that, in practice, the number of times we have to run the inner loop depends on the size of the training set. Here’s a common cost function used with gradient descent: $$ This discrepancy is commonly termed “cost” or “loss” and is quantified in a cost function. Gradient descent is an algorithm that iteratively tweaks a model’s parameters, with the goal of minimizing the discrepancy between the model’s predictions and the “true” labels associated with a set of training examples. Let’s begin with stochastic gradient descent’s predecessor and cousin: gradient descent. Some examples of optimization algorithms include gradient descent, the conjugate gradient method, BFGS, and L-BFGS. Optimization algorithms are the means used to find these optimal parameters, and develop robust models. Training involves finding values for a model’s parameters, θ, such that two, often conflicting, goals are met: 1) error on the set of training examples is minimized, and 2) the model generalizes to new data. In machine learning, what exactly does an optimization algorithm optimize?Īs you may know, supervised machine learning generally consists of two phases: 1 training (generating a model) and inference (making predictions with that model). Stochastic gradient descent is an optimization algorithm.

To orient a discussion of these papers, I thought it would be useful to dedicate one blog post to briefly developing stochastic gradient descent from “first principles.” This discussion is supposed to be illustrative, and errs in favor of pedagogical clarity, over mathematical rigor. Not surprisingly, three out of the four papers I just referenced came out of industry research. Parallelizing important optimization algorithms, like SGD, is the key to fast training and, by extension, fast model development. This topic isn’t just a theoretical curiosity. Many machine learning papers reference various flavors of stochastic gradient descent (SGD) - parallel SGD, asynchronous SGD, lock-free parallel SGD, and even distributed synchronous SGD, to name a few. “Nearly all of deep learning is powered by one very important algorithm: stochastic gradient descent.” - Ian Goodfellow A Brief Primer: Stochastic Gradient Descent

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed